Building a Multi-Agent Advertising Engine: A Step-by-Step Guide

Overview

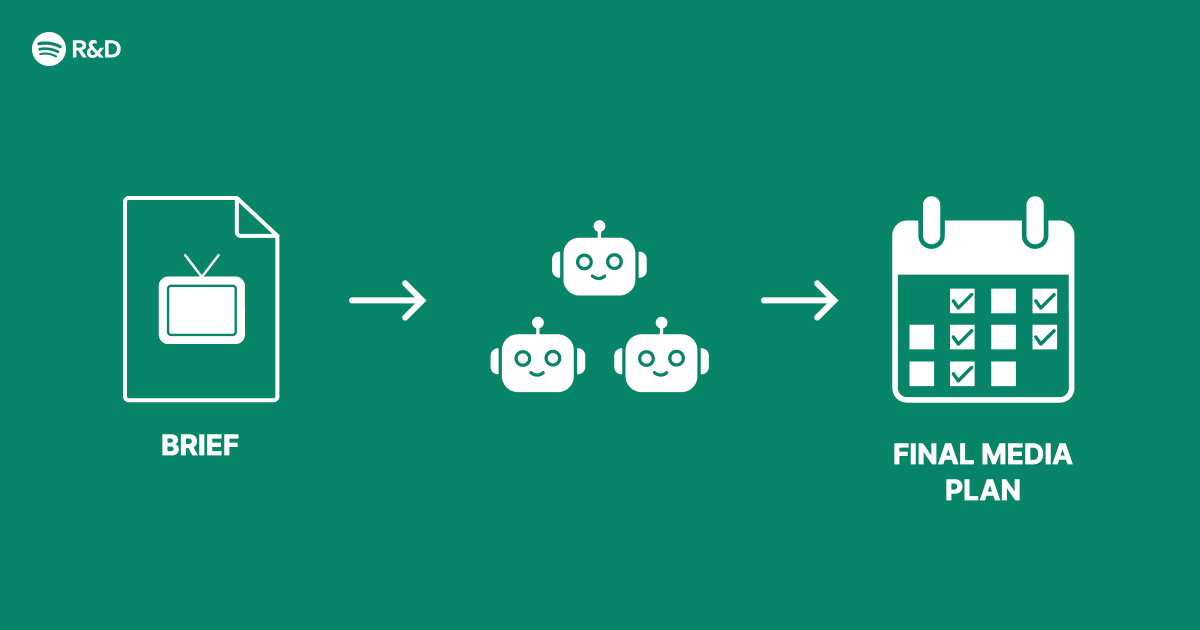

Modern digital advertising demands more than simple rule-based targeting. To deliver smarter, more adaptive campaigns, many organizations are turning to multi-agent architectures—systems where multiple specialized AI agents collaborate to analyze data, make decisions, and optimize results. This guide walks through the design and implementation of such a system, inspired by real-world challenges in advertising at scale. We focus on solving structural inefficiencies rather than just adding a single AI feature. The result: a modular, resilient advertising engine that can learn and adapt in real time.

Prerequisites

Before you begin, ensure you have the following:

- Programming skills: Proficiency in Python or Java, with experience in message queues and distributed systems.

- Infrastructure: Access to a cloud platform (AWS, GCP, Azure) with Kubernetes or similar orchestration.

- Data pipeline: A message broker or event streaming platform such as Apache Kafka or RabbitMQ for inter-agent communication.

- ML fundamentals: Understanding of reinforcement learning, multi-agent coordination, and basic neural networks.

- Advertising domain knowledge: Familiarity with ad auctions, bidding strategies, CTR/CVR prediction, and audience segmentation.

Step-by-Step Instructions

1. Define Agent Roles and Responsibilities

Identify the core tasks your advertising system must handle: budget allocation, creative selection, audience targeting, real-time bidding, and performance monitoring. Each task becomes an agent. For example:

- Budget Agent: Manages daily or campaign-level spend.

- Bidding Agent: Determines bid price per auction.

- Creative Agent: Chooses the best ad copy/asset.

- Targeting Agent: Decides which user segments to target.

- Analytics Agent: Collects outcomes and feeds back signals.

Assign each agent a distinct objective and a shared goal (e.g., maximize ROI or conversion volume). Document the interfaces and data each agent needs.
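One way to document the interfaces is to write them down as data and check that every input an agent expects is produced somewhere. The sketch below is illustrative only: the `AgentSpec` type, the message names, and the `EXTERNAL_EVENTS` set are assumptions made for this example, not part of any library.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Set, Tuple


@dataclass(frozen=True)
class AgentSpec:
    """One agent: its local objective and the message types it reads and writes."""
    name: str
    objective: str
    consumes: Tuple[str, ...] = ()
    produces: Tuple[str, ...] = ()


# Assumed to arrive from outside the system (e.g., the ad exchange).
EXTERNAL_EVENTS = {"auction_start", "auction_result"}

# Hypothetical message names; adapt them to your own schemas.
AGENTS = (
    AgentSpec("budget", "keep spend within the campaign cap",
              consumes=("performance_report",), produces=("budget_plan",)),
    AgentSpec("targeting", "pick user segments to pursue",
              consumes=("performance_report",), produces=("segment_list",)),
    AgentSpec("creative", "choose the best ad copy or asset",
              consumes=("auction_start", "segment_list"),
              produces=("creative_choice",)),
    AgentSpec("bidding", "set a bid price per auction",
              consumes=("auction_start", "budget_plan", "creative_choice"),
              produces=("bid_response",)),
    AgentSpec("analytics", "collect outcomes and publish feedback",
              consumes=("bid_response", "auction_result"),
              produces=("performance_report",)),
)


def unmet_inputs(agents: Iterable[AgentSpec]) -> Dict[str, Set[str]]:
    """Return, per agent, the consumed message types that nothing produces."""
    agents = list(agents)
    available = set(EXTERNAL_EVENTS)
    for agent in agents:
        available.update(agent.produces)
    gaps: Dict[str, Set[str]] = {}
    for agent in agents:
        missing = set(agent.consumes) - available
        if missing:
            gaps[agent.name] = missing
    return gaps


print(unmet_inputs(AGENTS))  # an empty dict means every input has a producer
```

Running a check like this whenever the agent list changes catches a missing producer before it shows up as a silent stall at runtime.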

2. Design Inter-Agent Communication Protocols

Agents must exchange information without creating bottlenecks. Use a publish‑subscribe model with a message broker. Define common message schemas (e.g., JSON with fields such as event_type, auction_id, user, and context).

Example Kafka message payload (JSON):

    {
      "event_type": "auction_start",
      "auction_id": "auction_123",
      "user": { "id": "u456", "age": 30, "device": "mobile" },
      "context": { "time_of_day": 14, "site": "news" }
    }

Use separate topics per agent type to avoid contention. Include a control topic for agent health checks and configuration updates.
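A consumer would typically validate each payload before acting on it and route it to the right topic. The following is a minimal sketch using only the standard library; the topic names and the `parse_auction_event` and `topic_for` helpers are hypothetical, and the actual publish call to your broker is left out.

```python
import json
from typing import Any, Dict

# Top-level fields expected in an auction event, with their JSON types.
REQUIRED_FIELDS = {
    "event_type": str,
    "auction_id": str,
    "user": dict,
    "context": dict,
}

# Hypothetical topic names: one per agent type, plus a shared control topic.
TOPIC_BY_EVENT = {
    "auction_start": "agents.bidding",
    "creative_request": "agents.creative",
    "health_check": "agents.control",
    "config_update": "agents.control",
}


def parse_auction_event(raw: bytes) -> Dict[str, Any]:
    """Decode a JSON payload and check the required top-level fields."""
    message = json.loads(raw)
    if not isinstance(message, dict):
        raise ValueError("payload must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in message:
            raise ValueError(f"missing field: {name}")
        if not isinstance(message[name], expected_type):
            raise ValueError(f"field {name} should be {expected_type.__name__}")
    return message


def topic_for(message: Dict[str, Any]) -> str:
    """Map an event type to the topic it should be published on."""
    event_type = message.get("event_type")
    if event_type not in TOPIC_BY_EVENT:
        raise ValueError(f"no topic for event type: {event_type!r}")
    return TOPIC_BY_EVENT[event_type]


raw = (b'{"event_type": "auction_start", "auction_id": "auction_123", '
       b'"user": {"id": "u456"}, "context": {"site": "news"}}')
event = parse_auction_event(raw)
print(topic_for(event))
```

Rejecting malformed messages at the edge keeps a single bad producer from propagating errors into every subscribing agent.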

3. Implement the Core Agent Logic

Each agent runs as an independent microservice. Write the core decision-making loop. Below is a simplified Python skeleton for a bidding agent using a reinforcement learning model:

    # QNetwork is assumed to live in your own reinforcement_learning module;
    # it is a placeholder, not a published package.
    from reinforcement_learning import QNetwork


    class BiddingAgent:
        def __init__(self):
            self.q_network = QNetwork()
            # Should be refreshed from the Budget Agent as spend accrues.
            self.budget_remaining = 1000

        def decide_bid(self, auction_context):
            """Return a bid price, capped by the remaining budget."""
            state = self.encode_state(auction_context)
            action = self.q_network.choose_action(state)  # returns bid multiplier
            bid_price = auction_context['floor_price'] * action
            return min(bid_price, self.budget_remaining)

        def learn(self, auction_result):
            """Update the model from one (state, action, reward, next_state) tuple."""
            state, action, reward, next_state = auction_result
            self.q_network.update(state, action, reward, next_state)

        def encode_state(self, context):
            # Convert context to feature vector
            return [context['ctr_estimate'], context['budget_left'], context['hour']]

Repeat similar patterns for other agents, tailoring the model (or rule‑based logic) to each role.
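The skeleton above depends on a QNetwork class that is not shown. To run it locally, one option is a small tabular Q-learning stand-in that exposes the same two methods. This is an assumption made for experimentation, not a neural network and not the model a production system would use; the action set, learning rate, and state rounding are arbitrary choices.

```python
import random
from collections import defaultdict


class QNetwork:
    """Tabular Q-learning stand-in exposing choose_action() and update()."""

    def __init__(self, actions=(1.0, 1.2, 1.5, 2.0), alpha=0.1, gamma=0.9,
                 epsilon=0.1, seed=None):
        self.actions = tuple(actions)   # candidate bid multipliers
        self.alpha = alpha              # learning rate
        self.gamma = gamma              # discount factor
        self.epsilon = epsilon          # exploration probability
        self.rng = random.Random(seed)
        self.q = defaultdict(float)     # (state_key, action) -> estimated value

    @staticmethod
    def _key(state):
        # Round features so that similar states share one table entry.
        return tuple(round(float(x), 1) for x in state)

    def choose_action(self, state):
        """Epsilon-greedy choice over the fixed set of bid multipliers."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        key = self._key(state)
        return max(self.actions, key=lambda a: self.q[(key, a)])

    def update(self, state, action, reward, next_state):
        """One Q-learning step: move Q(s, a) toward reward + gamma * max Q(s', .)."""
        key, next_key = self._key(state), self._key(next_state)
        best_next = max(self.q[(next_key, a)] for a in self.actions)
        target = reward + self.gamma * best_next
        self.q[(key, action)] += self.alpha * (target - self.q[(key, action)])
```

A table like this only scales to a handful of coarse features; it is useful for wiring and testing the agent loop before a real function approximator is plugged in.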

4. Set Up a Shared Knowledge Base

Agents need a centralized store for shared state—for example, current budget status, campaign goals, and learned model parameters. Use a fast key‑value store (Redis) or a distributed database (DynamoDB). Agents write their outputs (e.g., bid decisions, selected creatives) to this store after each auction cycle.
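The key property of the shared store is that updates to contended values, such as remaining budget, happen atomically. The sketch below uses an in-memory dictionary guarded by a lock as a stand-in for Redis or DynamoDB; the `SharedState` class and the `budget:<campaign_id>` key format are hypothetical.

```python
import threading


class SharedState:
    """In-memory stand-in for a shared key-value store."""

    def __init__(self, initial=None):
        self._data = dict(initial or {})
        self._lock = threading.Lock()

    def get(self, key, default=None):
        with self._lock:
            return self._data.get(key, default)

    def set(self, key, value):
        with self._lock:
            self._data[key] = value

    def reserve_budget(self, campaign_id, amount):
        """Deduct amount from the campaign budget if enough remains.

        The check and the write happen under one lock, so two agents cannot
        both spend the same remaining budget. Returns True on success.
        """
        key = f"budget:{campaign_id}"
        with self._lock:
            remaining = self._data.get(key, 0.0)
            if amount > remaining:
                return False
            self._data[key] = remaining - amount
            return True


store = SharedState({"budget:c1": 10.0})
print(store.reserve_budget("c1", 4.0), store.get("budget:c1"))
```

With a real store the check-and-decrement has to be atomic on the server side, for instance in a Redis Lua script or a WATCH/MULTI transaction; a read followed by a separate write from the client would reintroduce the race.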

5. Create a Coordination Mechanism

To prevent conflicting decisions (e.g., several agents increasing spend at the same time), implement a coordinator agent or a distributed lock for critical actions. A simple approach is a consensus round in which agents vote on high‑impact decisions. Consensus is expensive, so reserve it for infrequent state transitions (e.g., switching campaign strategy), and rely on an existing Raft-based store such as etcd rather than implementing Paxos or Raft yourself.
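The voting round can be as simple as a majority tally with a quorum requirement. The sketch below only counts votes inside one process; it does not provide the fault tolerance of a real consensus protocol, and the `majority_decision` helper and the option names are made up for illustration.

```python
from collections import Counter
from typing import Dict, Optional


def majority_decision(votes: Dict[str, str], quorum: int) -> Optional[str]:
    """Return the option backed by a strict majority of the votes cast.

    votes maps agent name -> chosen option. Returns None when fewer than
    quorum agents voted or when no option has more than half of the votes.
    """
    if not votes or len(votes) < quorum:
        return None
    option, count = Counter(votes.values()).most_common(1)[0]
    return option if count * 2 > len(votes) else None


votes = {
    "budget": "conservative",
    "bidding": "aggressive",
    "analytics": "conservative",
}
print(majority_decision(votes, quorum=3))
```

Returning None on a tie or a missed quorum lets the caller keep the current strategy, which is usually the safer default for high-impact changes.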

    # Pseudocode for coordination lock
    if coordinator.acquire_lock('budget_allocation'):
        try:
            new_budget_plan = compute_plan(agent_reports)
        finally:
            # Release even if compute_plan fails, so other agents are not blocked
            coordinator.release_lock('budget_allocation')
        broadcast(new_budget_plan)
    else:
        wait_and_retry()

6. Implement Feedback Loops

Each agent should observe the outcomes of its actions. The Analytics Agent collects performance metrics (impressions, clicks, conversions) and publishes them back to the message bus. Agents subscribe to their relevant metrics and update their internal models. For example, the Bidding Agent uses reinforcement learning to adjust bid strategies based on win rate and cost per conversion.
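To make the feedback concrete, here is one possible way to turn auction outcomes into a per-auction reward and into the aggregate metrics mentioned above. The `AuctionOutcome` fields and the fixed `conversion_value` are assumptions chosen for this sketch; a real system would take them from its own event schema and campaign settings.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AuctionOutcome:
    won: bool
    cost: float        # price paid; 0.0 when the auction was lost
    converted: bool


def reward(outcome: AuctionOutcome, conversion_value: float = 5.0) -> float:
    """Value gained minus price paid; lost auctions yield zero reward."""
    if not outcome.won:
        return 0.0
    gained = conversion_value if outcome.converted else 0.0
    return gained - outcome.cost


def summarize(outcomes: List[AuctionOutcome]) -> Dict[str, Optional[float]]:
    """Aggregate win rate and cost per conversion over a batch of outcomes."""
    wins = [o for o in outcomes if o.won]
    conversions = sum(1 for o in wins if o.converted)
    spend = sum(o.cost for o in wins)
    return {
        "win_rate": len(wins) / len(outcomes) if outcomes else 0.0,
        "cost_per_conversion": spend / conversions if conversions else None,
    }


batch = [
    AuctionOutcome(won=True, cost=1.0, converted=True),
    AuctionOutcome(won=True, cost=2.0, converted=False),
    AuctionOutcome(won=False, cost=0.0, converted=False),
    AuctionOutcome(won=False, cost=0.0, converted=False),
]
print(summarize(batch), [reward(o) for o in batch])
```

The per-auction reward is what a learning agent would pass to its update step, while the aggregates are better suited to dashboards and slower-moving agents such as budget allocation.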

7. Deploy and Monitor

Package each agent as a Docker container and deploy using Kubernetes. Create Helm charts for reproducible deployments. Use a service mesh (e.g., Istio) to handle traffic routing and observability. Set up dashboards (Grafana) to monitor latency, decision times, agent health, and overall advertising KPIs. Include alerts for agent failures or unexpected drift.

Test the system end‑to‑end using historical ad logs. Gradually roll out to production traffic with a canary deployment.
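A replay test over historical logs can be sketched as a loop that feeds each logged auction to a bidding policy and counts the auctions it would have won. This is a rough approximation under stated assumptions: the record fields `context` and `clearing_price` are hypothetical, the winner is assumed to pay the logged clearing price, and the effect of your own bid on that price is ignored.

```python
from typing import Any, Callable, Dict, List


def replay(logs: List[Dict[str, Any]],
           decide_bid: Callable[[Dict[str, Any]], float]) -> Dict[str, float]:
    """Replay logged auctions through a bidding policy and tally the results."""
    wins = 0
    spend = 0.0
    for record in logs:
        bid = decide_bid(record["context"])
        # Assumption: a bid at or above the logged clearing price wins
        # and pays that clearing price.
        if bid >= record["clearing_price"]:
            wins += 1
            spend += record["clearing_price"]
    return {"auctions": len(logs), "wins": wins, "spend": spend}


def floor_times_1_5(context: Dict[str, Any]) -> float:
    """Toy policy used only to exercise the replay loop."""
    return context["floor_price"] * 1.5


logs = [
    {"context": {"floor_price": 1.0}, "clearing_price": 1.2},
    {"context": {"floor_price": 2.0}, "clearing_price": 3.5},
    {"context": {"floor_price": 0.5}, "clearing_price": 0.75},
]
print(replay(logs, floor_times_1_5))
```

Because logged data only reflects the decisions that were actually made, treat replay numbers as a sanity check before the canary rollout rather than as a forecast of live performance.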

Common Mistakes

- Over‑engineering agents: Starting with too many agents introduces complexity. Begin with two or three and expand only when needed.

- Ignoring tail latency: If one agent takes too long to decide, the whole auction may be missed. Use timeouts and fallback decisions.

- Tight coupling: Avoid direct HTTP calls between agents; always use asynchronous message passing to maintain scalability.

- Forgotten cold start: Agents that rely on learned models need initial training. Pre‑train with historical data or use rule‑based fallbacks until models converge.

- Missing conflict resolution: When two agents both control a shared resource (e.g., budget), define priority rules or use a mediator agent.

- Inefficient communication: Sending duplicate data or large payloads can saturate the message bus. Keep messages minimal and aggregate where possible.
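The timeout-and-fallback advice from the tail latency and cold start items can be sketched with the standard library. The `decide_with_deadline` helper, the 50 ms default, and the floor-price fallback are illustrative assumptions; a production service would tune the deadline to the exchange's response window.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

_executor = ThreadPoolExecutor(max_workers=4)


def decide_with_deadline(decide, context, fallback, timeout_s=0.05):
    """Run decide(context) with a deadline; use fallback(context) if it is late.

    The fallback is also used when the primary decision raises, so one
    failing model does not cost the auction.
    """
    future = _executor.submit(decide, context)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        # Best effort only: a call that is already running is not interrupted.
        future.cancel()
        return fallback(context)
    except Exception:
        return fallback(context)


def slow_model(context):
    time.sleep(0.5)  # simulates a model that misses the deadline
    return context["floor_price"] * 2.0


def floor_price_fallback(context):
    return context["floor_price"]  # simple rule-based default


print(decide_with_deadline(slow_model, {"floor_price": 1.0}, floor_price_fallback))
```

The same wrapper covers the cold start case: until a model has converged, the rule-based fallback can serve as the primary decision and the model can be evaluated in shadow mode.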

Summary

This guide presented a modular approach to building a multi-agent advertising engine. By decomposing advertising tasks into specialized agents, using asynchronous communication, and embedding feedback loops, you can create a system that adapts more quickly than a monolithic design. Start small, validate coordination, and iterate based on real performance data. The result is a scalable architecture that turns advertising into an ongoing optimization process.
